File Drawer Problem

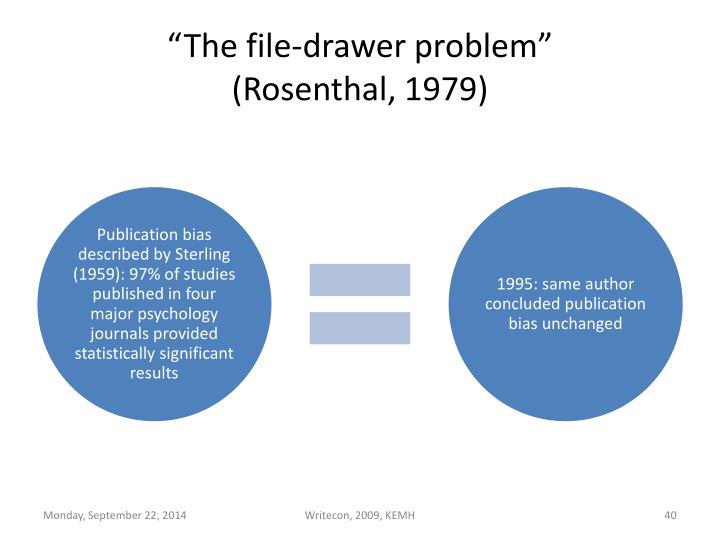

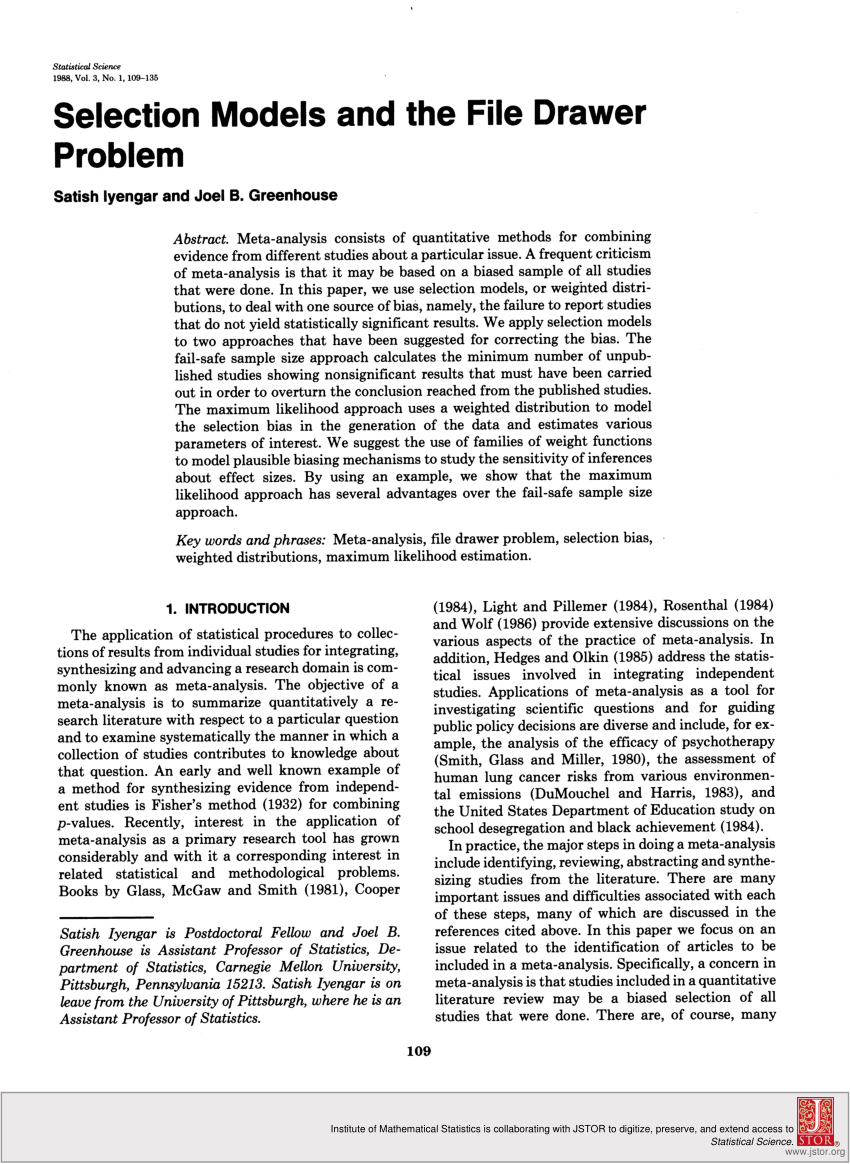

In 1979, Robert Rosenthal coined the term “file drawer problem” to describe the tendency of researchers to publish positive results much more readily than negative results, skewing our ability to discern what an accumulating body of knowledge actually means [1]. Studies that yield nonsignificant or negative results are said to be put in a file drawer instead of being published. In other words, results that do not support the researchers' hypotheses often go no further than their file drawers, leading to a bias in the published research.

The file drawer problem, or publication bias, refers to the selective reporting of scientific findings. The term is used especially when the bias works against studies that fail to reject the null hypothesis (i.e., that do not produce a statistically significant result), making them less likely to be published than studies that do. Such a selection process increases the likelihood that published results reflect Type I errors rather than true population parameters, and it biases published effect sizes upward.

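To make the effect-size claim concrete, here is a minimal simulation sketch. It is not taken from the source and rests on illustrative assumptions: each hypothetical study compares two groups of 20 participants with normally distributed outcomes (SD 1), the true effect is 0.2, significance is judged with a two-sided z-test at α = 0.05 that treats the SD as known, and only significant studies are "published". The names `run_study` and `simulate_file_drawer` and all parameter values are hypothetical.

```python
import random
import statistics
from statistics import NormalDist


def run_study(true_effect, n_per_group, rng):
    """Simulate one hypothetical two-group study with unit-variance outcomes.

    Returns the observed mean difference and a two-sided p-value from a
    z-test that assumes the population SD (1.0) is known.
    """
    control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    treated = [rng.gauss(true_effect, 1.0) for _ in range(n_per_group)]
    diff = statistics.fmean(treated) - statistics.fmean(control)
    se = (2.0 / n_per_group) ** 0.5  # SE of a difference of two means when SD = 1
    z = diff / se
    p = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return diff, p


def simulate_file_drawer(true_effect=0.2, n_per_group=20, n_studies=2000,
                         alpha=0.05, seed=1):
    """Run many studies and 'publish' only those with p < alpha.

    Returns (mean effect over all studies, mean effect over published
    studies, number of published studies).
    """
    rng = random.Random(seed)
    all_effects, published = [], []
    for _ in range(n_studies):
        diff, p = run_study(true_effect, n_per_group, rng)
        all_effects.append(diff)
        if p < alpha:  # only "significant" studies leave the file drawer
            published.append(diff)
    pub_mean = statistics.fmean(published) if published else float("nan")
    return statistics.fmean(all_effects), pub_mean, len(published)


if __name__ == "__main__":
    all_mean, pub_mean, n_pub = simulate_file_drawer()
    print(f"mean effect, all studies:       {all_mean:.3f}")
    print(f"mean effect, published studies: {pub_mean:.3f} ({n_pub} published)")
```

Averaged over every study, the estimate sits near the assumed true effect of 0.2, while the published subset overstates it considerably, because with small samples only unusually large observed differences clear the significance threshold. Calling `simulate_file_drawer(true_effect=0.0)` shows the Type I side: roughly α of the studies are published, and every one of them is a false positive.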
Put differently, the file drawer problem reflects the influence of a study's results on whether the study is published. Studies with significant results are more likely to be published (Rothstein, 2008), which can result in an inaccurate representation of the effects of interest.

When deciding whether to publish results, researchers may ask whether the results are statistically significant, whether they are practically significant, and whether they agree with the expectations of the researcher or sponsor.

Selective reporting is also an ethical issue: failure to report all the findings of a clinical trial breaks the core values of honesty, trustworthiness and integrity of the researchers.
